AGI isn't the hardest part

My thoughts on AI progress as a founder in the middle of it

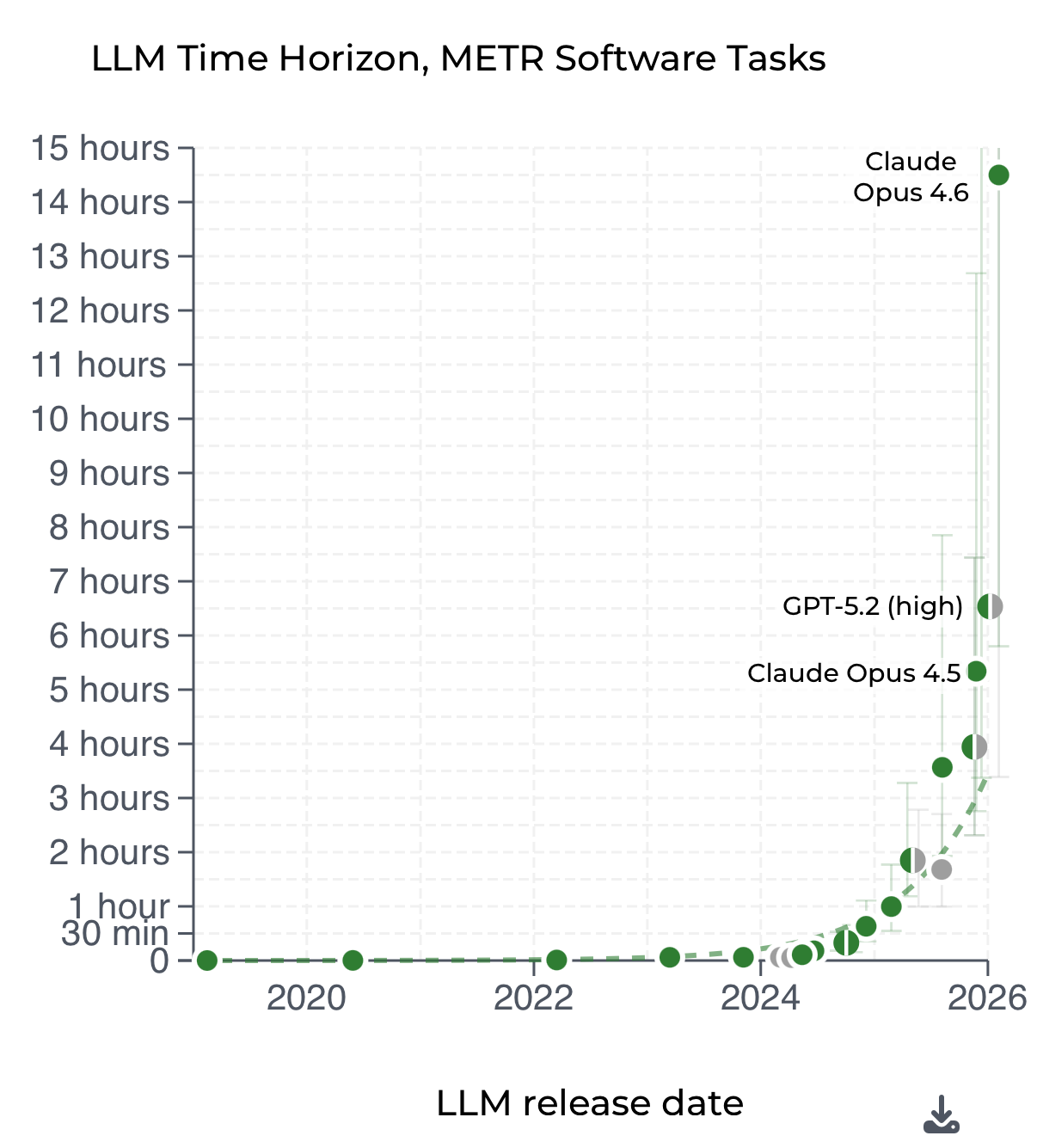

I swear that whenever I take a vacation, AI progress leaps forward 10x. After the last few months, I’m not sure if I should be taking more or fewer vacations. In February, we saw incredible model releases (Opus 4.6, Codex 5.3) and application releases (Cowork, etc.). We also saw massive market cap destruction (the SaaS-pocalypse) and the largest percentage layoff of an S&P 500 company ever.

That’s some crazy whiplash. And if you’re paying attention, you’ll see that these are trends that have been years in the making. It makes you wonder what’s coming next.

Most of the AI labs are my customers, along with some of the largest companies deploying AI at scale. I live in San Francisco and can’t go to my local coffee shop or grocery store without seeing another friend who’s building an at-scale venture-backed AI company. And I spend way too much time on Twitter.

So what happens next? Is your company going to survive this? Are the layoffs just starting? How fast is this actually moving?

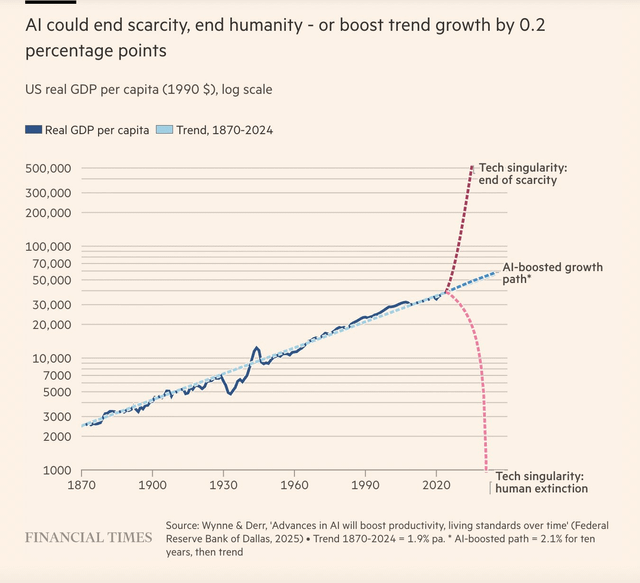

Bottom line up front: I believe full diffusion of AGI is about one decade away, and things will change at an accelerating rate over those 10 years, with the world on the other side of 2030 looking as different as it did before and after 9/11, COVID, and World War 2.

The AI people on Twitter will think 10 years is way too long. The average American probably thinks 10 years is too optimistic. Let’s dive in.

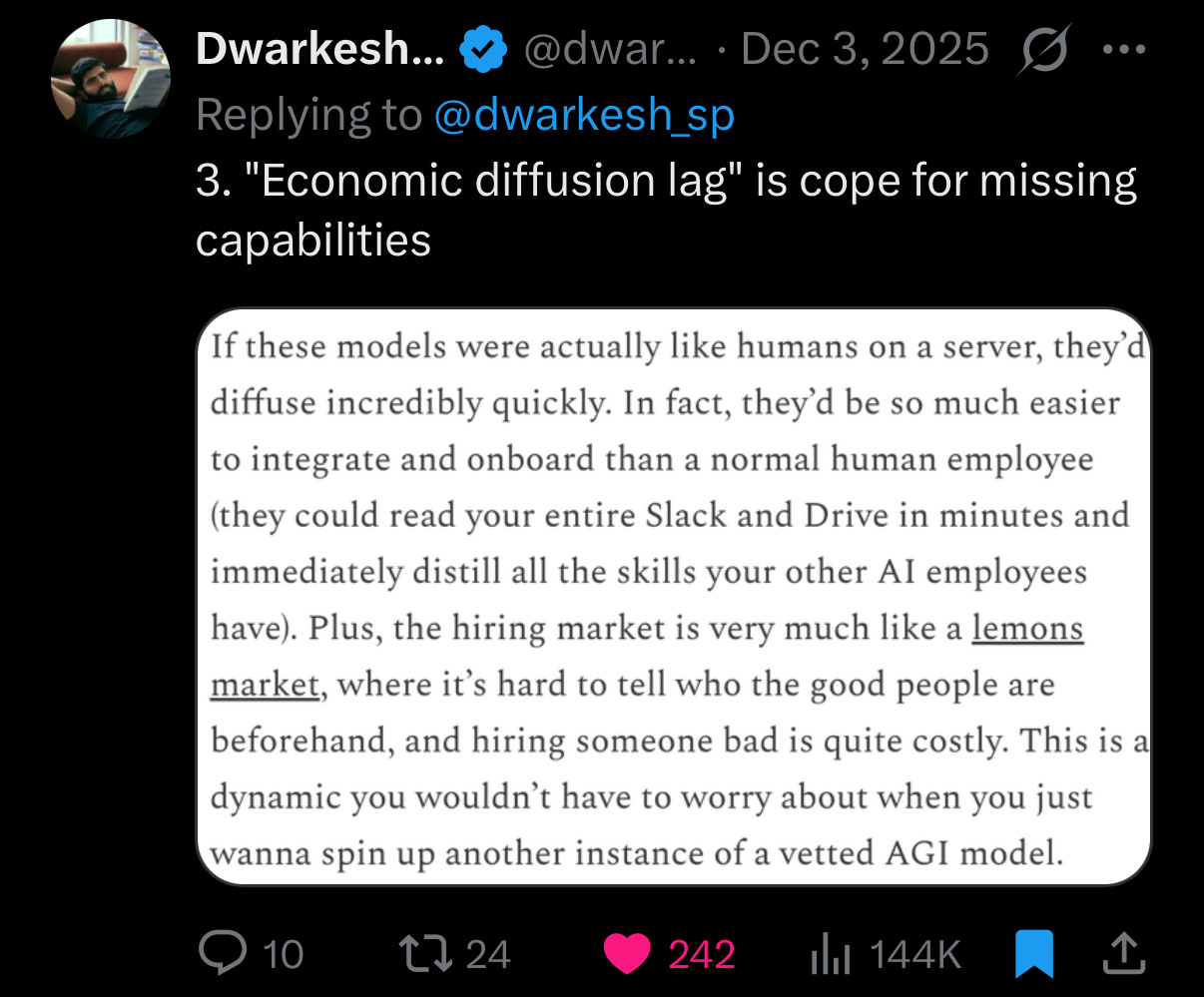

Diffusion is not cope

My recent number one source for good AI content has been Dwarkesh Patel’s podcast. He hosts some amazing guests and asks good questions. It’s like Joe Rogan for AI but less muscle mass and more brain power.

On a recent Dwarkesh podcast episode with Dario Amodei, Dario talked about how challenging it is to predict research progress, allocate resources, and balance research vs. deployment. He highlighted how, beyond R&D, “diffusion,” our ability to put AI into the hands of individuals, remains a real challenge. Dwarkesh countered by saying “diffusion is cope,” arguing that any sufficiently good AGI model could be used reliably by anyone to do any task.

I tend to agree with Dario. Even if the models can become good enough within a couple years (and I think they will!), it’s going to take a serious amount of human work to get to ubiquitous distribution. There are three reasons for this.

The models still aren’t good enough yet. The labs’ job isn’t done. It continues to get easier over time, but there are still global-scale logistics problems to solve.

The models only just started being able to draw silly pictures reliably. Their coding has gotten REALLY good, but not good enough. I do believe that OpenAI, Anthropic, and DeepMind are working on this with good intentions and the risks in mind, but there’s real distance left to cover.

People and organizations resist change. Getting the world to adopt a completely different way of living and working will require a massive amount of education. We’ve made significant progress on the digital transformation of our society, but even that work isn’t complete. There are many systems that you cannot completely self-service digitally without having to escalate to a human. Voice AI will help with that. If you give an agent a credit card, web browser, email, and phone number, you can get a lot done. But it cannot open a bank account. Not yet, at least.

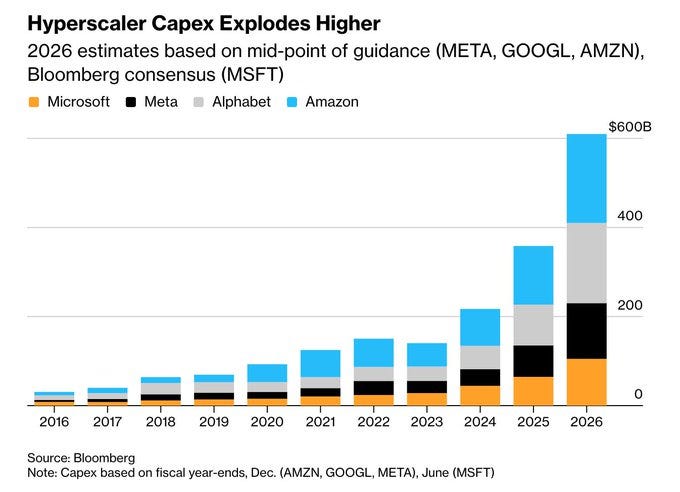

Physical infrastructure can’t keep up. Beyond the organizational challenge, you need to serve all these models (often called inference). You need GPUs. So many GPUs.

GPUs are really only one part of this. You also need data centers, and then you need chips, and then you need power. Power is the hardest one right now, and there are several incredible companies focused on revolutionizing the way we structure, store, and handle power. I’m incredibly bullish on them.

How fast can you build a power plant? What about 1,000 of them? Even if we’re able to get the latest AI, we’ll need to FUEL it with power. AGI might arrive but be impossible to distribute globally because of the power and GPUs needed to run it.

The STRUCTURAL barriers of human society and the PHYSICAL barriers of our infrastructure to power AI will create a LIMITING FUNCTION on how soon AGI will fully diffuse into our world.

We may get AGI sooner, but it will be unfairly distributed to those with capital and connections.

What this means for software

OK, so we’re 10 years from full AGI diffusion. What happens in the meantime?

It’s going to be harder and harder for software companies to win by just existing. Disruption is going to be easier than ever and starting a competitor is going to be easier than ever. Market cap is no longer generated by feature differentiation. Ability to execute at scale, brand, and customer trust will matter most.

There’s a popular idea in tech called the bitter lesson: Rich Sutton’s observation that general methods that scale with compute eventually beat hand-engineered approaches. In tech circles it usually gets read as: no matter what you build, a future iteration of AI models will be able to accomplish that same task. People apply this to software all the time, saying “it isn’t worth building that piece of software because the next generation of models will simply generate it in one shot.” There’s truth to that.

Software land has benefited from “having more features” being a viable way to increase market caps and beat competitors. We had so much excess in software that building a company that was completely open source was actually a viable strategy, because it was so challenging to run infrastructure at scale. That era is over.

Companies that are predominantly software businesses are going to face a lot of pressure to improve their ability to execute and retain market cap in the short term. Expect organizational restructuring, layoffs, and risky product bets.

Don’t believe me? Look at how much of the S&P 500 is made up of software companies, then look at the cash balances of those companies and you’ll see that they’re ready to weather this storm.

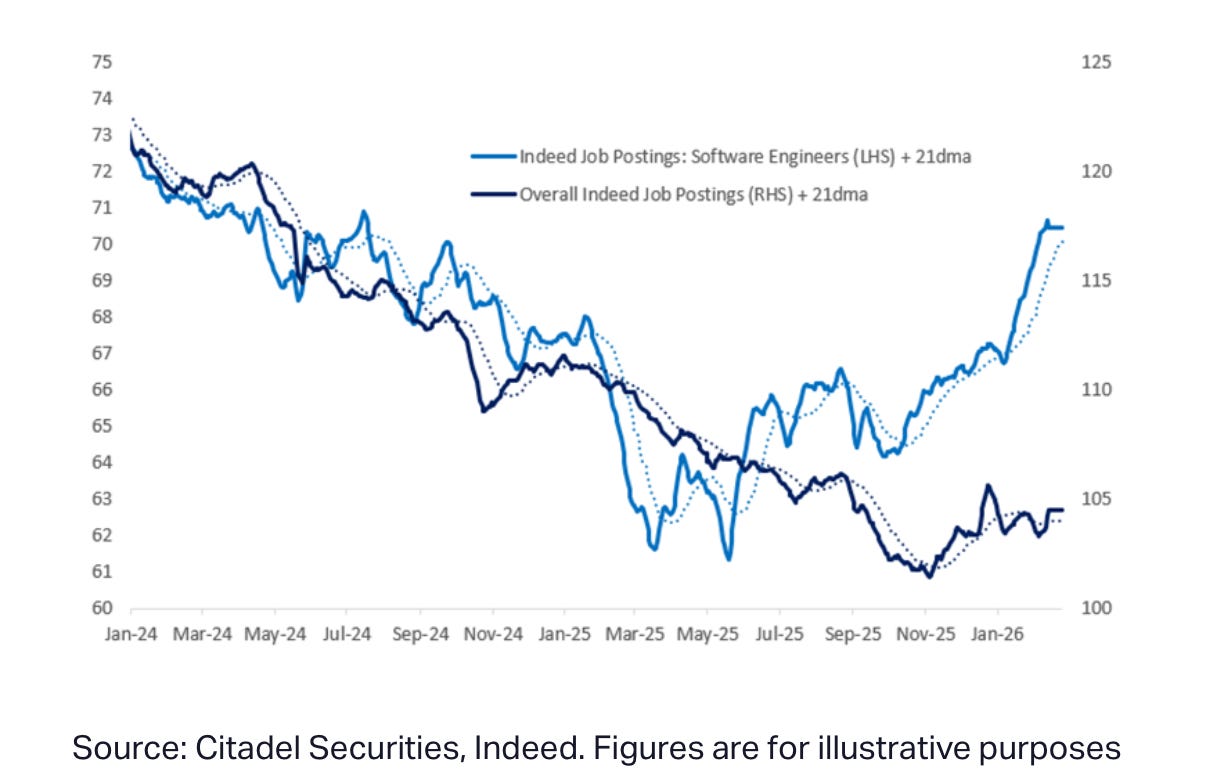

None of this means jobs disappear, though. People think AI is going to kill employment wholesale, and the data says otherwise. Software engineer employment is actually increasing.

AI can make it easier to replicate what software does, AND demand for human work can keep growing because more capable people unlock more demand. Which jobs change and how fast is the real question.

So what companies are “safe”?

I believe there will be only four types of companies in the future:

AI labs are companies turning compute into knowledge, then delivering that knowledge. There will be many types of labs. Some will specialize. But their primary function is creating and generating intelligence.

Infrastructure companies give the labs more capabilities and create the rails for that knowledge to run on. Power, internet, water, chips, and digital infrastructure too, like connection to payment rails or digital services via a browser.

Systems of record are the incumbent places where all of our current knowledge lives, is organized, and is accessed. They’ve become giant systems that are very hard to move away from because the world is so entrenched in them. I think those continue to exist.

Services means humans doing services for other humans, or people employing agents to do services on their behalf. McKinsey is a services business. So is a hair salon. There’s a wide range, and there will continue to be.

Your business either already is one of these four types, or it will need to become one.

So are we screwed?

No. The most important thing you can do right now is not overreact, but certainly react. Think critically about how to start understanding these new types of technology and systems.

People can draw a very crazy line to a world that’s completely utopian or dystopian, but I think that’s unrealistic. It’s almost like the meme: nothing ever happens. The truth is somewhere in the middle. Things are going to change, and there will be turbulence, but it will only truly hurt you if you aren’t staying in the loop, understanding things, and growing yourself. You definitely cannot continue forward assuming everything’s going to be the same.

I really do liken it to COVID or other major changes in history. If we ignore what’s going to happen, it’s going to hurt. But taking an optimistic view, leaning into this future, and being involved with it is going to be a really huge advantage.

Regardless, the world will change. I guess I’ll need to take another vacation to see what happens.